AI Image Generation Looks Incredible. But It Doesn’t Actually Help You Furnish a Room.

Over the past year, AI image generation has exploded.

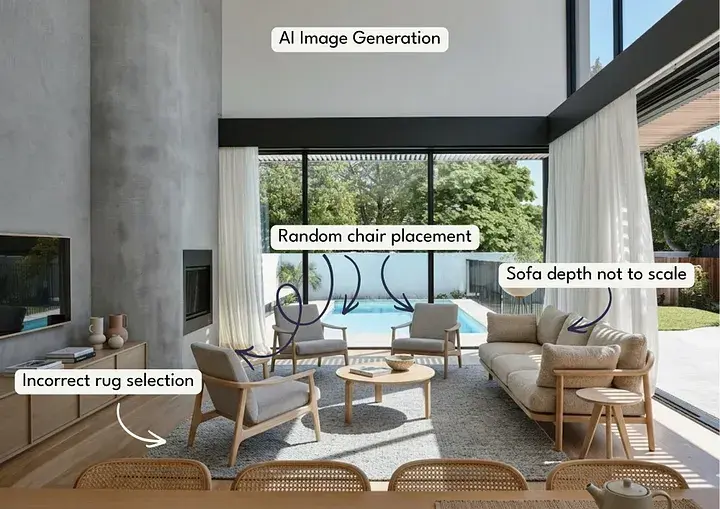

Tools can now produce stunning interior scenes in seconds - perfectly styled living rooms, curated colour palettes, and designer-looking spaces that feel straight out of a magazine.

At first glance, it feels like the future of interior design, but there’s one major problem: none of it actually understands your room.

The sofa might look perfect in the image, the rug might feel beautifully styled, and the armchair might complete the space visually. But the moment you try to apply that image to a real home, things start to fall apart.

The scale is wrong, the placement is random, and you have no easy way to control where anything actually goes.

As an interior designer (I'm Indi, founder & CEO of Imersian), I see this as the point where generative AI completely misses the mark.

Designing a room isn’t just about how something looks; it’s about how things fit together in space - and that’s a very different problem.

The Generative AI Illusion

AI image generators are incredibly good at creating beautiful pictures, but they aren’t actually designing a room.

They’re predicting pixels.

When you ask an AI to generate an interior scene, it doesn’t understand the dimensions of the room, the true size of the furniture, or the spatial relationships between objects. It simply generates an image that looks plausible.

That’s why you often see things like:

- Sofas that are subtly too large for the room

- Rugs that float awkwardly under furniture

- Armchairs positioned in places that would block walkways

- Objects that shift or distort slightly between images

For inspiration, this is fine, but when someone is actually trying to choose furniture for their home, it creates a problem.

Because suddenly the question becomes: “Will this actually work in my space?” And generative AI simply can’t answer that.

Good Interior Design Is Spatial

When I design a room for a client, the first thing we consider isn’t colour or style; it’s space.

How people move through the room, where the focal points sit, how furniture interacts with the architecture, how the proportions of each piece affect the balance of the space.

A 3-metre rug will completely change the feeling of a living room compared to a 2-metre rug. A sofa that’s just 20 centimetres deeper than expected can disrupt the entire layout.
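To make that arithmetic concrete, here is a minimal Python sketch of how a deeper sofa eats into a walkway. Every number in it is hypothetical: the room depth, the furniture sizes, the gap between sofa and table, and the 0.9 m minimum walkway, which is an assumed rule of thumb for illustration rather than a fixed standard. It only measures along one axis of the room, which is enough to show how 20 centimetres can tip a layout from comfortable to cramped.

```python
# Hypothetical one-axis layout check. All measurements are in metres,
# taken along the depth of the room starting from the wall behind the sofa.

MIN_WALKWAY_M = 0.9  # assumed minimum walkway width, for illustration only


def walkway_clearance(room_depth, sofa_depth, sofa_to_table, table_depth):
    """Space left between the coffee table and the opposite wall."""
    used = sofa_depth + sofa_to_table + table_depth
    return room_depth - used


def layout_works(room_depth, sofa_depth, sofa_to_table=0.45, table_depth=0.6):
    """Return (walkway is wide enough, clearance rounded to the centimetre)."""
    clearance = walkway_clearance(room_depth, sofa_depth, sofa_to_table, table_depth)
    return clearance >= MIN_WALKWAY_M, round(clearance, 2)


# The same hypothetical 3.0 m deep seating area with two sofa depths.
print(layout_works(3.0, 0.95))  # (True, 1.0)
print(layout_works(3.0, 1.15))  # (False, 0.8)
```

Nothing else in the room changed between the two calls; the extra 20 cm of sofa depth alone pushes the walkway below the assumed minimum.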

These are the details that determine whether a room feels calm and balanced, or cramped and awkward, and they’re impossible to judge from a generative image alone.

Interior design is fundamentally about spatial relationships - the distance between objects, the scale of furniture relative to the room, the placement of pieces within the layout.

Without understanding those relationships, you’re not really designing; you’re just generating pictures.

The Problem With Letting AI Decide Placement

One of the biggest limitations of generative AI interiors is that the user has no control. You can prompt the AI to place a sofa in a living room, but you can’t decide where that sofa should go.

Should it sit against the wall?

Float in the centre of the room?

Face the windows?

Anchor the seating zone?

Those decisions are essential to how a space functions, and they’re personal.

Different people will choose completely different layouts depending on how they use their space. Some people prioritise conversation areas, others want clear walkways, some want the TV to be the focal point, and others want the view.

When AI decides the layout for you, it removes the most important part of the design process: the ability to experiment. People want to move things around, they want to test ideas, they want to see what happens if the rug is larger, the sofa moves forward, or the armchair rotates slightly.

That’s how real design decisions happen.

Scale Is Everything

Scale is one of the most underestimated challenges in online furniture shopping. A sofa might look perfect in a product photo, but once it arrives, it can suddenly feel enormous or surprisingly small.

This is one of the biggest drivers of returns in furniture retail. In fact, the industry average return rate for furniture is often cited at around 30%, and each bulky item returned can cost retailers hundreds of dollars.

And in many cases, the reason is simple: customers couldn’t accurately visualise the size of the product in their space.

Generative AI doesn’t solve this problem, because the furniture in those images isn’t tied to real-world dimensions; it’s just part of the picture.

Without true scale, customers are still guessing, and guessing leads to hesitation, poor decisions, and returns.
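One simple way to tie a product to real-world dimensions is to measure something of known size in a photo of the room and scale everything else from it. The minimal Python sketch below assumes the reference wall and the sofa sit roughly flat to the camera at the same distance, so a single pixels-per-metre ratio applies; real room photos have perspective, which this deliberately ignores. The function names and measurements are hypothetical.

```python
def pixels_per_metre(reference_px, reference_m):
    """Scale factor derived from a feature of known size in the photo."""
    if reference_m <= 0:
        raise ValueError("reference length must be positive")
    return reference_px / reference_m


def on_screen_width(product_width_m, scale):
    """Width in whole pixels that a product should occupy at that scale."""
    return round(product_width_m * scale)


# Hypothetical photo: a 4.0 m wall spans 1000 px.
scale = pixels_per_metre(1000, 4.0)  # 250.0 px per metre
print(on_screen_width(2.2, scale))   # 550 for a 2.2 m sofa
print(on_screen_width(2.6, scale))   # 650 for a 2.6 m sofa
```

Because the sofa's size comes from its real dimensions rather than from what looks plausible, the 40 cm difference between the two models shows up as a fixed 100 px difference on screen.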

For a typical user, changing the position of an object using prompts alone can quickly become an iteration nightmare. Even a simple adjustment, such as moving a chair slightly to the left or placing a table closer to a wall, may require multiple prompt attempts, because each prompt typically generates a completely new image instead of making a precise change to the existing one.

As a result, users often end up trying several prompt variations and generating many images before achieving the desired result. This trial-and-error process can be time-consuming and frustrating, especially for users who expect simple and direct control over the layout.
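The contrast with prompting is easier to see when the layout is stored as data. In the minimal Python sketch below, which is a hypothetical model rather than how any particular tool works, each piece of furniture is a rectangle with real dimensions and a position in metres. Moving a chair is then a single, exact edit to one record, and everything else in the room stays exactly where it was. Rotation is left out to keep the footprint maths simple.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Item:
    x: float      # metres from the left wall to the item's left edge
    y: float      # metres from the back wall to the item's back edge
    width: float  # metres
    depth: float  # metres


def overlaps(a, b):
    """True if two axis-aligned footprints share any floor area."""
    return (a.x < b.x + b.width and b.x < a.x + a.width and
            a.y < b.y + b.depth and b.y < a.y + a.depth)


def fits_in_room(item, room_width, room_depth):
    """True if the item's footprint lies entirely inside the room."""
    return (item.x >= 0 and item.y >= 0 and
            item.x + item.width <= room_width and
            item.y + item.depth <= room_depth)


def move(layout, name, dx=0.0, dy=0.0):
    """Return a new layout in which only the named item is shifted by (dx, dy)."""
    return {k: (replace(v, x=v.x + dx, y=v.y + dy) if k == name else v)
            for k, v in layout.items()}


# Hypothetical room layout.
layout = {
    "sofa": Item(x=0.5, y=0.0, width=2.2, depth=0.95),
    "armchair": Item(x=3.0, y=0.2, width=0.8, depth=0.8),
}

# Move the armchair 25 cm to the left: one precise change, nothing regenerated.
moved = move(layout, "armchair", dx=-0.25)
print(moved["armchair"].x)                          # 2.75
print(moved["sofa"] == layout["sofa"])              # True
print(overlaps(moved["sofa"], moved["armchair"]))   # False

# Another 25 cm and the armchair would clash with the sofa.
clash = move(moved, "armchair", dx=-0.25)
print(overlaps(clash["sofa"], clash["armchair"]))   # True
```

Each edit is deterministic and reversible, so trying "what if the chair moves a little" costs one operation instead of another round of prompts.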

What Customers Actually Want

When people shop for furniture online, they’re not looking for a perfectly styled AI image; they’re trying to answer practical questions about their own home.

Questions like:

- Will this rug fit under my sofa properly?

- Is this coffee table too large for the space?

- Where should the armchair go?

- How will these pieces look together?

And most importantly: Will this actually work in my room?

To answer that, customers need two things:

- Control

- Accuracy

They need to be able to place items where they want them, and they need to trust that what they’re seeing reflects reality.